Artificial intelligence is rapidly transforming medical diagnosis, but how accurate is it really? This article breaks down the latest AI medical diagnosis accuracy statistics for 2026, drawing on peer-reviewed research indexed in the NIH's PubMed Central (PMC) and published in JAMA and NPJ Digital Medicine. You’ll learn how AI performs across key specialties like radiology, pathology, cardiology, and dermatology; how it compares head-to-head with human physicians; and where it still falls short. We also cover real-world adoption rates, FDA approval data, key risks like algorithmic bias and LLM hallucination, and best practices for safe clinical deployment. Whether you’re a clinician, patient, or healthcare decision-maker, this guide gives you the data you need to understand where AI-assisted diagnosis stands today and where it’s heading.

Introduction: Why AI Diagnostic Accuracy Matters in 2026

If you’ve searched for AI medical diagnosis accuracy statistics in 2026, you’re likely a clinician evaluating new tools, a patient curious about AI-assisted care, a healthcare administrator weighing technology investments, or a researcher tracking the state of the science. This article is written for all of you.

Artificial intelligence is no longer a futuristic concept in medicine — it is already embedded in radiology suites, pathology labs, emergency departments, and primary care workflows. But how accurate is AI at diagnosing disease, really? Does it outperform physicians? Where does it fall short? And what do the most current peer-reviewed statistics actually say?

This evidence-based guide covers the latest AI medical diagnosis accuracy statistics for 2026, drawing from NIH-published meta-analyses, PMC peer-reviewed studies, FDA approval data, and clinical trial outcomes. We break down performance by specialty, compare AI against human clinicians, and explain what these numbers mean for patient safety and the future of healthcare.

Key takeaway: AI diagnostic tools show accuracy rates between 76% and 94% across imaging-heavy specialties, often surpassing average physician performance, but expert clinicians still outperform general-purpose AI models in complex cases.

- 76–94%: AI imaging accuracy, vs. 65–78% for humans

- 66%: physicians using AI as of 2024 (↑78% YoY)

- 340+: FDA-approved AI tools in active clinical use

What Is AI Medical Diagnosis? A Definition

AI medical diagnosis refers to the use of machine learning (ML), deep learning (DL), and natural language processing (NLP) algorithms to analyze clinical data, including medical images, electronic health records (EHRs), genomic sequences, and patient history, in order to identify, classify, or predict disease.

Primary AI Diagnostic Approaches

- Computer Vision / Deep Learning for medical imaging (X-rays, MRIs, CT scans, pathology slides)

- Large Language Models (LLMs) for text-based clinical vignette analysis and differential diagnosis

- Predictive Analytics integrating EHR data, lab values, and vital signs

- Multimodal AI combining imaging with genomic and biometric data

AI Medical Diagnosis Accuracy Statistics 2026: By Specialty

Radiology and Medical Imaging

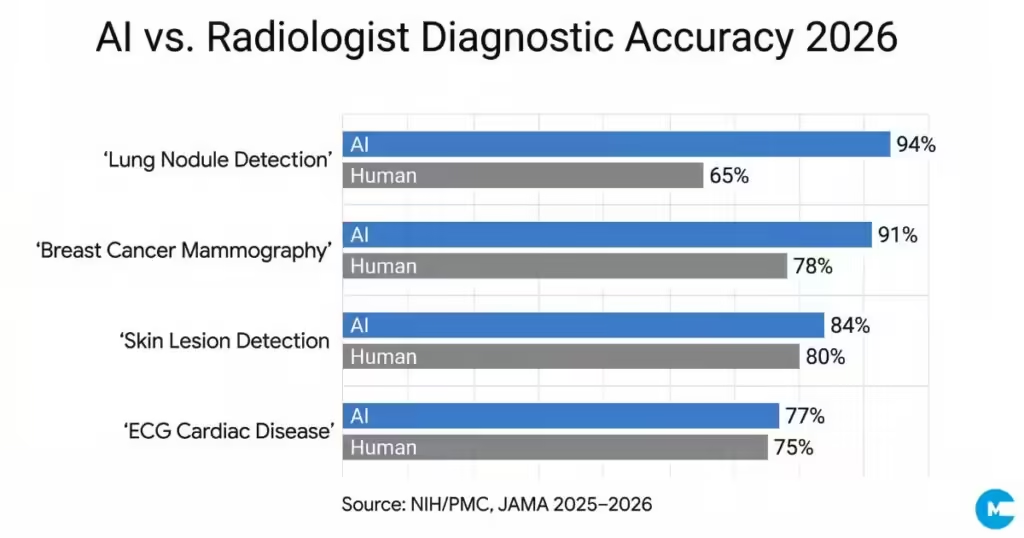

Radiology represents the most mature domain for AI diagnostics, and the statistics reflect this. A landmark collaboration between Massachusetts General Hospital and MIT produced an AI system that achieved 94% accuracy in detecting lung nodules from CT scans, well above the 65% accuracy that human radiologists scored on the same dataset.

In breast cancer detection, a South Korean study found that AI-based diagnosis achieved 90% sensitivity in detecting breast cancer masses and 91% accuracy in early-stage breast cancer detection, compared with radiologist performance of approximately 78%.

Across broader imaging and clinical vignettes, AI demonstrates diagnostic accuracy between 76% and 90%, frequently surpassing average physician performance of 73–78% on tasks such as mammography interpretation and skin lesion detection.
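The radiology figures mix two different metrics, sensitivity and accuracy, which are not interchangeable. The sketch below shows how each is derived from a confusion matrix. The class name and all patient counts are hypothetical, chosen only for illustration, and are not taken from any of the cited studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionMatrix:
    """Counts from comparing a diagnostic tool against confirmed diagnoses."""
    tp: int  # disease present, tool flagged it
    fp: int  # disease absent, tool flagged it
    tn: int  # disease absent, tool did not flag it
    fn: int  # disease present, tool missed it

    def sensitivity(self) -> float:
        """Share of true disease cases the tool catches."""
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        """Share of disease-free cases the tool correctly leaves unflagged."""
        return self.tn / (self.tn + self.fp)

    def accuracy(self) -> float:
        """Share of all cases, diseased or not, that the tool gets right."""
        return (self.tp + self.tn) / (self.tp + self.fp + self.tn + self.fn)


# Hypothetical screening cohort: 1,000 patients, 50 of whom have the disease.
cm = ConfusionMatrix(tp=45, fp=80, tn=870, fn=5)
print(f"sensitivity = {cm.sensitivity():.1%}")  # 90.0%
print(f"specificity = {cm.specificity():.1%}")  # 91.6%
print(f"accuracy    = {cm.accuracy():.1%}")     # 91.5%
```

With a rare condition, accuracy alone can mislead: in this same hypothetical cohort, a tool that flagged no one would score 95% accuracy with 0% sensitivity. That is why it helps to check which metric a study reports before comparing headline numbers.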

Pathology

In computational pathology, AI has demonstrated strong performance in tissue classification and mitotic figure detection. A 2025 systematic review available in PMC, covering 51 articles published between 2019 and 2024, found that across radiology and pathology combined, AI improved accuracy and reduced diagnostic time by approximately 90% or more compared with conventional methods.

FDA-approved whole-slide imaging (WSI) systems using AI for primary diagnosis have shown particular promise in oncology, where identifying subtle histological patterns is time-consuming and subjective.

Cardiology

A recent multicenter study found that a deep learning ECG model (EchoNext) detected structural heart disease from 12-lead ECGs with 77.3% accuracy, outperforming cardiologists on the same classification task. AI-powered ECG analysis is among the fastest-growing areas of applied medical AI.

Dermatology

AI demonstrates impressive accuracy in diagnosing melanoma. Traditional dermatoscopy raises diagnostic accuracy from approximately 65–80% with unaided visual examination to 75–84%. AI-assisted dermoscopy adds a further layer of precision, with deep learning models achieving accuracy comparable to or exceeding that of board-certified dermatologists in controlled study conditions.

General Medicine and Large Language Models (LLMs)

The picture becomes more nuanced when examining general-purpose AI and LLMs applied to broad diagnostic tasks. A 2025 systematic review and meta-analysis published in NPJ Digital Medicine, analyzing 83 studies, found that generative AI models achieved an overall diagnostic accuracy of 52.1% — comparable to non-expert physicians but significantly lower than expert physicians (p = 0.007).

A separate systematic review in JMIR Medical Informatics (2025), analyzing 4,762 cases across 19 LLMs, found that the accuracy of the primary diagnosis for the optimal model ranged from 25% to 97.8%, depending on the clinical specialty and case complexity. Triage accuracy ranged from 66.5% to 98%.

Important nuance: AI accuracy statistics vary dramatically by task type, specialty, and whether the model is a specialized deep learning system or a general-purpose LLM. Specialized imaging AI consistently outperforms generalist models.

AI vs. Physician: How Does AI Compare to Human Clinicians?

One of the most searched questions in this space is whether AI outperforms doctors. The honest answer in 2026 is: it depends on the task.

| Specialty | AI Accuracy (2025–2026) | Physician Accuracy |
|---|---|---|
| Lung Nodule Detection (CT) | 94% | 65% (radiologists) |
| Breast Cancer (Mammography) | 90–91% | 78% (radiologists) |
| Skin Lesion / Melanoma | Comparable to or above dermatologists (controlled studies) | 65–80% unaided; 75–84% with dermatoscopy |
| ECG / Cardiac Structural Disease | 77.3% | Lower than AI (cardiologists, same task) |
| General Clinical Vignettes (LLMs) | 52.1% (generative AI) | Comparable (non-experts); significantly higher (experts) |
| Radiology + Pathology Combined | 90%+ efficiency gain (diagnostic time) | Baseline |

A multicenter randomized clinical vignette survey study published in JAMA (PMC10731487) found that clinicians’ baseline diagnostic accuracy without AI input was 73.0%. When provided with standard AI model predictions, accuracy increased to 75.9%, a statistically significant improvement (p = .02). When AI predictions were accompanied by explanatory outputs, accuracy improved by 4.4 percentage points over baseline.

This suggests the greatest value of AI may not be in replacing physician judgment, but in augmenting it — particularly when AI explanations help clinicians interpret why the model flagged a finding.

AI Diagnostic Adoption Statistics: Who Is Using It and Where

Understanding how widely AI diagnosis is being adopted gives important context to the accuracy statistics.

- 66% of physicians reported using health AI in 2024, up from 38% in 2023: a rise of 28 percentage points, reported as a 78% year-over-year increase.

- Over 340 FDA-approved AI tools are currently in clinical use, particularly for diagnosing strokes, brain tumors, and breast cancer.

- Healthcare providers account for over 30% of AI revenue share in the healthcare sector.

- A meta-analysis found that human-AI collaboration reduced diagnostic image reading times by approximately 27%, with sensitivity roughly 1.12 times that of human readers alone.

- 68% of physicians reported recognizing at least some advantage of AI in patient care, up from 63% in 2023.

- The average ROI for healthcare AI is $3.20 for every $1 invested, with returns typically realized within 14 months.
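Several figures in this list can be read either as percentage-point changes or as relative changes, and the two differ widely. The sketch below computes both from the rounded survey percentages quoted in the list; the function names are illustrative only.

```python
def percentage_point_change(old_pct: float, new_pct: float) -> float:
    """Absolute difference between two percentages."""
    return new_pct - old_pct


def relative_change(old_pct: float, new_pct: float) -> float:
    """Change expressed as a percentage of the starting value."""
    return (new_pct - old_pct) / old_pct * 100


# Physician use of health AI: 38% (2023) -> 66% (2024), as quoted above.
print(percentage_point_change(38, 66))     # 28
print(round(relative_change(38, 66), 1))   # 73.7

# Physicians seeing some advantage of AI: 63% (2023) -> 68% (2024).
print(percentage_point_change(63, 68))     # 5
print(round(relative_change(63, 68), 1))   # 7.9
```

Computed from the rounded 38% and 66%, the relative increase comes out near 74% rather than the reported 78%. The gap may reflect unrounded survey data or a different base, but that is an assumption; it is worth checking against the original survey before quoting the figure.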

Despite these figures, adoption remains uneven. Large hospitals are 1.48x more likely to adopt AI diagnostic tools than smaller facilities. Geographic disparities are also significant — hospitals in New Jersey led the U.S. with 48.94% AI adoption, while some states reported near-zero adoption rates.

Limitations of AI in Medical Diagnosis: What the Statistics Don’t Show

Accurate reporting on AI medical diagnosis requires acknowledging limitations alongside strengths. A responsible, evidence-based view includes the following concerns:

Algorithmic Bias

AI models trained on non-representative datasets can perform poorly on underrepresented populations — including patients of certain ethnicities, ages, or body types. Diagnostic AI accuracy statistics often reflect performance on the test cohort used in the original study, which may not generalize to all clinical populations.

The ‘Black Box’ Problem

Many high-performing AI systems — particularly deep learning models — cannot explain their decision-making processes in clinically meaningful terms. This lack of interpretability can reduce clinician trust and makes it difficult to identify when and why the model errs. Researchers are actively developing interpretable AI models that output explanatory reasoning alongside diagnoses.

Generative AI Hallucination

Large language models used for diagnosis can ‘hallucinate’ — generating confident-sounding but factually incorrect clinical information. This is a critical concern in high-stakes diagnostic environments. Studies note this as a major barrier to widespread LLM deployment in autonomous diagnostic roles.

Data Privacy and Regulatory Compliance

Using AI with patient data raises significant HIPAA compliance, data sovereignty, and consent issues. Regulatory frameworks vary significantly across countries, creating challenges for global deployment of AI diagnostic tools.

How Healthcare Systems Can Maximize AI Diagnostic Accuracy

AI is not a passive tool: healthcare institutions play an active role in determining how accurately it performs in their specific environments. The following best practices are supported by evidence:

1. Implement Human-in-the-Loop Oversight

The most effective deployments of AI diagnostic tools maintain a clinician review step rather than allowing fully autonomous AI decisions. Studies consistently show that human-AI collaboration outperforms either alone for complex diagnostic tasks.

2. Train on Diverse, Representative Datasets

Institutions should verify that the AI tools they deploy have been validated on patient populations representative of their specific patient demographics. Asking vendors for disaggregated accuracy data by age, ethnicity, and comorbidity is a critical due diligence step.
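A disaggregated accuracy check of the kind described here can be sketched in a few lines. The record fields (`age_band`, `predicted`, `confirmed`) and the sample data are hypothetical; a real validation set would be far larger and would need confidence intervals for each subgroup.

```python
from collections import defaultdict


def accuracy_by_group(records, group_key):
    """Return {group: accuracy} for records holding 'predicted' and 'confirmed' labels."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for record in records:
        group = record[group_key]
        total[group] += 1
        correct[group] += int(record["predicted"] == record["confirmed"])
    return {group: correct[group] / total[group] for group in total}


# Hypothetical validation records, for illustration only.
records = [
    {"age_band": "18-64", "predicted": "malignant", "confirmed": "malignant"},
    {"age_band": "18-64", "predicted": "benign", "confirmed": "benign"},
    {"age_band": "18-64", "predicted": "benign", "confirmed": "benign"},
    {"age_band": "18-64", "predicted": "malignant", "confirmed": "benign"},
    {"age_band": "65+", "predicted": "benign", "confirmed": "malignant"},
    {"age_band": "65+", "predicted": "malignant", "confirmed": "malignant"},
]

for group, acc in sorted(accuracy_by_group(records, "age_band").items()):
    print(f"{group}: {acc:.0%}")
```

In this toy data the overall accuracy is 4 out of 6, which hides the gap between 75% in one age band and 50% in the other. That hidden gap is the reason to ask vendors for the per-group breakdown.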

3. Select FDA-Cleared or CE-Marked Tools

With over 340 FDA-approved AI tools available in the U.S., there is little reason to deploy unvalidated systems for diagnostic purposes. FDA clearance applies to a defined intended use and patient population, so confirm that the cleared indication matches how the tool will actually be used.

4. Monitor Post-Deployment Performance

AI model performance can degrade over time — a phenomenon called ‘model drift’ — as patient populations, equipment, and clinical practices change. Systematic monitoring of AI diagnostic outputs against confirmed diagnoses is essential for maintaining accuracy standards.
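One simple way to monitor AI outputs against confirmed diagnoses is a rolling accuracy window with an alert threshold. This is a minimal sketch, not a validated monitoring method: the class name, window size, and threshold are all assumptions, and production monitoring would typically add statistical tests and subgroup breakdowns.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Tracks agreement between AI output and confirmed diagnosis over recent cases."""

    def __init__(self, window_size: int, alert_threshold: float):
        self.window_size = window_size
        self.alert_threshold = alert_threshold
        # deque with maxlen drops the oldest outcome once the window is full.
        self._outcomes = deque(maxlen=window_size)

    def record(self, ai_diagnosis: str, confirmed_diagnosis: str) -> None:
        self._outcomes.append(ai_diagnosis == confirmed_diagnosis)

    def accuracy(self):
        """Accuracy over the current window, or None if no cases are recorded."""
        if not self._outcomes:
            return None
        return sum(self._outcomes) / len(self._outcomes)

    def drift_suspected(self) -> bool:
        """Flag only once the window is full, to avoid alerts on tiny samples."""
        if len(self._outcomes) < self.window_size:
            return False
        return self.accuracy() < self.alert_threshold


# Hypothetical settings: 5-case window, alert below 80% agreement.
monitor = RollingAccuracyMonitor(window_size=5, alert_threshold=0.8)
for _ in range(5):
    monitor.record("pneumonia", "pneumonia")
print(monitor.accuracy(), monitor.drift_suspected())  # 1.0 False

monitor.record("pneumonia", "pulmonary embolism")
monitor.record("normal", "pneumonia")
print(monitor.accuracy(), monitor.drift_suspected())  # 0.6 True
```

A window this small is only for demonstration. In practice the window should be large enough that a dip reflects real change rather than noise.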

5. Invest in Clinician AI Literacy

The JAMA study cited above showed that when clinicians could understand AI explanations, diagnostic accuracy improved more meaningfully than when they received AI outputs alone. Training clinicians to critically evaluate AI recommendations — rather than accept or reject them wholesale — is a high-leverage investment.

Frequently Asked Questions (FAQ)

Q: What is the accuracy of AI in medical diagnosis in 2026?

A: AI diagnostic accuracy in 2026 ranges widely by specialty. Specialized imaging AI achieves 76–94% accuracy in tasks like lung nodule detection and breast cancer screening, often surpassing the 65–78% average seen in human radiologist studies. General-purpose large language models show an overall diagnostic accuracy of approximately 52%, comparable to non-expert physicians but below expert clinicians.

Q: Can AI replace doctors for diagnosis?

A: No. As of 2026, AI diagnostic tools function as clinical decision support systems rather than autonomous replacements for physicians. Studies consistently show that human-AI collaboration outperforms either alone. FDA-approved AI tools are cleared for specific, defined uses and require clinician oversight. Expert physicians significantly outperform general-purpose AI in complex diagnostic scenarios.

Q: How many AI diagnostic tools are FDA-approved?

A: As of 2025–2026, over 340 AI-powered diagnostic tools have received FDA approval. These are primarily used in radiology for detecting conditions including strokes, brain tumors, and breast cancer. The number has grown substantially from fewer than 100 approvals in 2020, reflecting rapid maturation of the regulatory landscape.

Q: What percentage of doctors use AI for diagnosis?

A: According to 2024 data, 66% of physicians reported using health AI in some form — up from 38% in 2023, representing a 78% year-over-year increase. The most widely adopted application across healthcare systems is AI-assisted clinical documentation, with 100% of surveyed healthcare systems reporting at least some usage.

Q: What are the main risks of AI medical diagnosis?

A: The main risks include algorithmic bias (reduced accuracy in underrepresented populations), the ‘black box’ problem (lack of explainability), hallucination in large language models, data privacy risks, and model drift over time. These risks are why all major clinical AI applications currently require human clinician oversight and validation.

Q: Does AI improve diagnostic accuracy when used with doctors?

A: Yes. A JAMA-published randomized clinical study found that clinician accuracy increased from 73.0% to 75.9% when AI predictions were provided, a statistically significant improvement. When AI explanations were also included, accuracy improved by 4.4 percentage points over baseline, suggesting that interpretable AI outputs offer the greatest augmentation to clinical judgment.

Reputable External Sources & Citations

This article cites the following peer-reviewed and institutional sources:

- National Institutes of Health / PMC — Systematic review: “Reducing the workload of medical diagnosis through artificial intelligence” (PMC11813001, 2025).

- NPJ Digital Medicine / PMC — Meta-analysis: “A systematic review and meta-analysis of diagnostic performance comparison between generative AI and physicians” (PMC11929846, March 2025).

- JMIR Medical Informatics — “Comparing Diagnostic Accuracy of Clinical Professionals and Large Language Models: Systematic Review and Meta-Analysis” (e64963, April 2025).

- JAMA / PMC — “Measuring the Impact of AI in the Diagnosis of Hospitalized Patients: A Randomized Clinical Vignette Survey Study” (PMC10731487, 2023).

- MDPI Applied Sciences — “Artificial Intelligence in Medical Diagnostics: Foundations, Clinical Applications, and Future Directions” (Published January 10, 2026).